Project and Code Update Summary

Deep Layer Knowing Neural Network (DLKNN)

The Deep Layer Knowing Neural Network (DLKNN) integrates multiple cognitive and consciousness dimensions inspired by frameworks from cognitive science, neuroscience, spirituality, and philosophy. The model leverages custom neural layers to represent various states of awareness, knowledge, and consciousness, providing a sophisticated tool for consciousness research.

Key Concepts

- Custom Neurons:

- Awareness Neurons: These neurons model awareness by computing a ReLU-activated weighted sum of the inputs and scaling it by the average of the input values. This mimics how awareness might integrate information from various sources.

- Reflective Neurons: These neurons handle input dimension mismatches by padding and applying an activation function. This simulates the reflective process of adjusting to different perspectives and dimensions of information.

- Intentional Neurons: These neurons incorporate a goal-directed intention by scaling their activation by the average of the input values multiplied by a predefined goal value (0.5 in the example script). This reflects the intentional aspect of cognition, where certain thoughts and actions are directed towards achieving specific goals.

- Emotional Neurons: These neurons model emotional processing by scaling a ReLU-activated weighted sum of the inputs by the average of the input values, the same computation the awareness neurons use. This represents how emotions can modulate and influence cognitive processes.

- Network Architecture:

- The architecture includes layers representing different cognitive and consciousness states, such as Levels of Knowing, Levels of Knowledge, Levels of Consciousness, Levels of Unknowing, Levels of Unknowns, Levels of Unconsciousness, Wilber’s Altitudes, and Aurobindo’s Integral Yoga Stages.

- Each layer is assigned specific units and colors to represent different states, providing a visual and structural organization of the network.

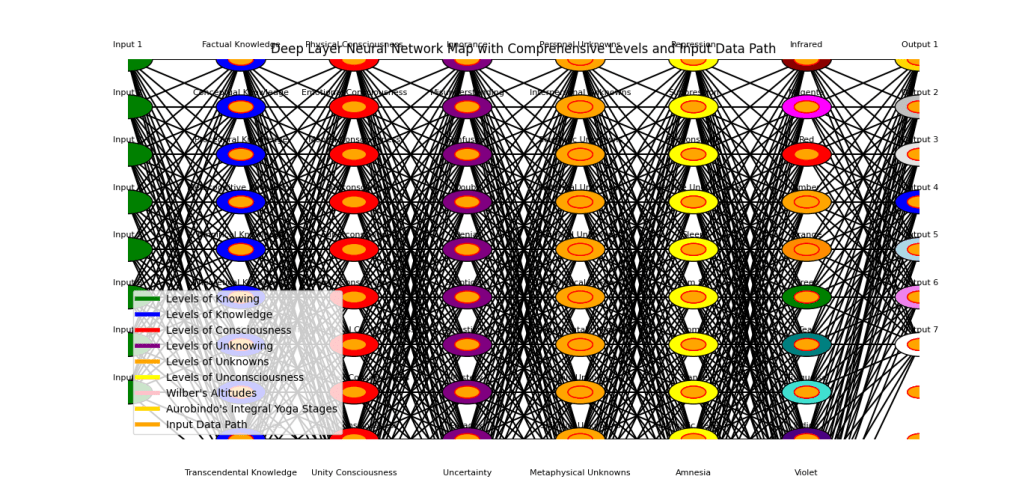

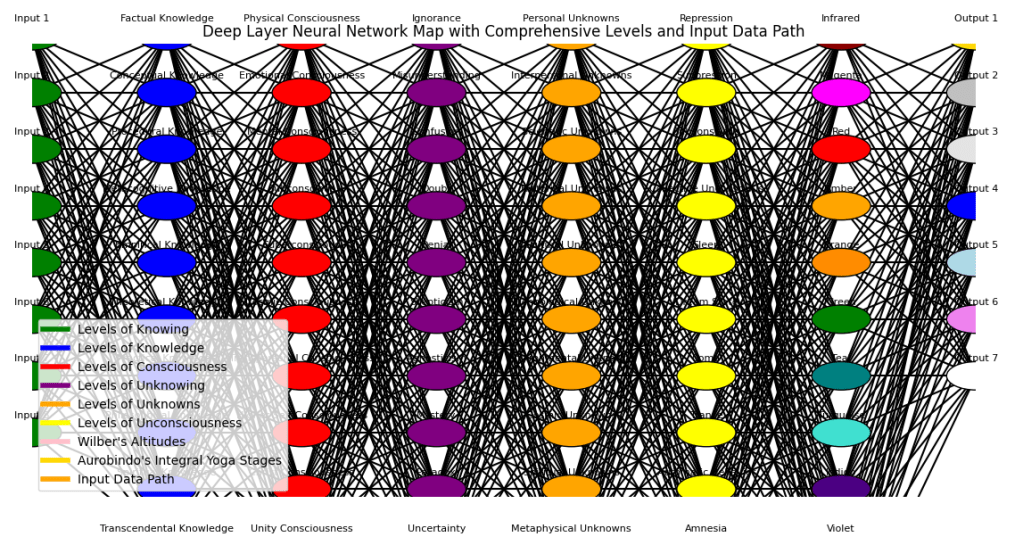

- Visualization:

- The DLKNN includes a comprehensive neural network map that visualizes the architecture, including the paths of input data.

- The map includes legends to identify various levels and layers, aiding interpretation and understanding of the network structure.

- Training and Classification:

- The model is trained using the Adam optimizer and categorical cross-entropy loss, a standard pairing for multi-class classification.

- The classify_inputs function classifies new input data using the trained model, predicting various classes that represent different cognitive and consciousness states.

- Model Saving and Loading:

- The trained model can be saved in the Keras format for future use, ensuring that the trained parameters and architecture are preserved.

- Functions are provided to load the trained model and classify new inputs, making the model practical and reusable.
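To make the custom neuron descriptions above more concrete, here is a minimal NumPy sketch of the gating idea that the awareness, intentional, and emotional layers share in the scripts further down: a ReLU-activated weighted sum is scaled by the mean of the inputs, optionally multiplied by a goal value. The helper name gated_forward, the random kernel, and the example sizes are assumptions made for illustration; they are not part of the DLKNN scripts.

```python
import numpy as np


def gated_forward(inputs, kernel, goal=1.0):
    """ReLU(inputs @ kernel) scaled by the per-sample input mean times `goal`.

    Hypothetical helper: goal=1.0 mirrors the awareness/emotional neurons,
    any other value mirrors the intentional neuron.
    """
    gate = inputs.mean(axis=-1, keepdims=True) * goal  # shape (batch, 1)
    activation = np.maximum(inputs @ kernel, 0.0)       # shape (batch, units)
    return activation * gate                            # gate broadcasts over units


rng = np.random.default_rng(0)
x = rng.random((3, 100)).astype(np.float32)        # 3 samples, 100 features
w = rng.normal(size=(100, 32)).astype(np.float32)  # assumed random kernel

awareness_out = gated_forward(x, w)           # gate = mean(x)
intentional_out = gated_forward(x, w, 0.5)    # gate = 0.5 * mean(x)

print(awareness_out.shape)
print(np.allclose(intentional_out, 0.5 * awareness_out))
```

With a goal of 0.5 the intentional output is simply half the awareness output for the same kernel, which shows that the goal acts as a fixed scaling factor rather than a learned target.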

How It Works

- Model Initialization:

- The model is initialized with an input dimension (e.g., 100) and an output dimension (e.g., 10).

- Custom layers are added to the model, each representing a different aspect of cognition or consciousness. These layers include the AwarenessLayer, ReflectiveLayer, IntentionalLayer, and EmotionalLayer.

- Training:

- The model is trained on a dataset where input data represents various features of cognitive processes, and output data represents the different cognitive and consciousness states.

- The model learns the patterns and relationships between the input features and the output states through backpropagation and optimization.

- Classification:

- Once trained, the model can classify new input data. The classify_inputs function takes new input data, passes it through the network, and returns the predicted classes.

- The predicted classes represent the different states of awareness, knowledge, and consciousness that the input data corresponds to.

- Visualization:

- The neural network map visualizes the layers, neurons, and connections within the DLKNN.

- Input data paths are also visualized, showing how data flows through the network and influences the predictions.

- Saving and Loading:

- The trained model is saved to a file (LargeKnowingModel-noalgonlp.keras), preserving the trained parameters and architecture.

- The model can be loaded later to classify new data without retraining, making it practical for ongoing research and application.
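The training and classification steps above rest on three small computations: a softmax over the output layer, the categorical cross-entropy loss, and an argmax to pick the predicted class. The following NumPy sketch works through them on made-up scores; the function names and the example numbers are assumptions for illustration and do not come from the DLKNN scripts.

```python
import numpy as np


def softmax(logits):
    """Row-wise softmax with max-subtraction for numerical stability."""
    shifted = logits - logits.max(axis=1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=1, keepdims=True)


def categorical_cross_entropy(probs, one_hot_targets, eps=1e-7):
    """Mean negative log-probability assigned to the true class."""
    clipped = np.clip(probs, eps, 1.0)
    return float(-(one_hot_targets * np.log(clipped)).sum(axis=1).mean())


# Made-up output scores for 2 samples and 3 classes
logits = np.array([[2.0, 1.0, 0.1],
                   [0.2, 0.3, 3.0]])
targets = np.array([[1.0, 0.0, 0.0],
                    [0.0, 0.0, 1.0]])

probs = softmax(logits)
loss = categorical_cross_entropy(probs, targets)
predicted_classes = np.argmax(probs, axis=1)  # same step classify_inputs performs

print(predicted_classes)
print(round(loss, 4))
```

The argmax line is the same operation classify_inputs applies to the output of model.predict, so the returned values are class indices, not state names.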

Code for DLKNN with input data visualization

A Python script for DLKNN (replace yourname in the model file path with your own user name)

import matplotlib.pyplot as plt

import networkx as nx

import numpy as np

import tensorflow as tf

# Function to create a scalable model
def create_expandable_model(input_dim, output_dim, layer_configs):
    model = tf.keras.Sequential()
    model.add(tf.keras.Input(shape=(input_dim,)))
    # Add one ReLU Dense layer per configuration entry; the index keeps layer names unique
    for i, config in enumerate(layer_configs):
        layer_name = f"{config['name']}_{i}"
        model.add(tf.keras.layers.Dense(units=config['units'], activation='relu', name=layer_name))
    model.add(tf.keras.layers.Dense(units=output_dim, activation='softmax', name='Output_Layer'))
    model.compile(optimizer='adam', loss='categorical_crossentropy', metrics=['accuracy'])
    return model

# Layer configurations

layer_configs = [

{'name': 'Awareness', 'units': 32, 'color': 'green'},

{'name': 'Recognition', 'units': 32, 'color': 'green'},

{'name': 'Understanding', 'units': 64, 'color': 'green'},

{'name': 'Intuition', 'units': 64, 'color': 'green'},

{'name': 'Insight', 'units': 128, 'color': 'green'},

{'name': 'Realization', 'units': 128, 'color': 'green'},

{'name': 'Wisdom', 'units': 256, 'color': 'green'},

{'name': 'Enlightenment', 'units': 256, 'color': 'green'},

{'name': 'Factual_Knowledge', 'units': 64, 'color': 'blue'},

{'name': 'Conceptual_Knowledge', 'units': 64, 'color': 'blue'},

{'name': 'Procedural_Knowledge', 'units': 128, 'color': 'blue'},

{'name': 'Metacognitive_Knowledge', 'units': 128, 'color': 'blue'},

{'name': 'Empirical_Knowledge', 'units': 256, 'color': 'blue'},

{'name': 'Theoretical_Knowledge', 'units': 256, 'color': 'blue'},

{'name': 'Practical_Knowledge', 'units': 512, 'color': 'blue'},

{'name': 'Philosophical_Knowledge', 'units': 512, 'color': 'blue'},

{'name': 'Spiritual_Knowledge', 'units': 1024, 'color': 'blue'},

{'name': 'Transcendental_Knowledge', 'units': 1024, 'color': 'blue'},

{'name': 'Physical_Consciousness', 'units': 64, 'color': 'red'},

{'name': 'Emotional_Consciousness', 'units': 128, 'color': 'red'},

{'name': 'Mental_Consciousness', 'units': 256, 'color': 'red'},

{'name': 'Subconscious', 'units': 128, 'color': 'red'},

{'name': 'Superconscious', 'units': 64, 'color': 'red'},

{'name': 'Cosmic_Consciousness', 'units': 32, 'color': 'red'},

{'name': 'Transpersonal_Consciousness', 'units': 16, 'color': 'red'},

{'name': 'Higher_Self_Consciousness', 'units': 8, 'color': 'red'},

{'name': 'Christ_Consciousness', 'units': 4, 'color': 'red'},

{'name': 'Unity_Consciousness', 'units': 2, 'color': 'red'},

{'name': 'Non_Dual_Consciousness', 'units': 1, 'color': 'red'},

{'name': 'Infrared_Archaic', 'units': 32, 'color': 'darkred'},

{'name': 'Magenta_Magic', 'units': 32, 'color': 'magenta'},

{'name': 'Red_Power', 'units': 64, 'color': 'red'},

{'name': 'Amber_Mythic', 'units': 64, 'color': 'orange'},

{'name': 'Orange_Scientific', 'units': 128, 'color': 'darkorange'},

{'name': 'Green_Sensitive', 'units': 128, 'color': 'green'},

{'name': 'Teal_Integrative', 'units': 256, 'color': 'teal'},

{'name': 'Turquoise_Holistic', 'units': 256, 'color': 'turquoise'},

{'name': 'Indigo_HigherMind', 'units': 64, 'color': 'indigo'},

{'name': 'Violet_IlluminatedMind', 'units': 128, 'color': 'blueviolet'},

{'name': 'Ultraviolet_Overmind', 'units': 256, 'color': 'purple'},

{'name': 'ClearLight_Supermind', 'units': 512, 'color': 'white'},

{'name': 'Psychic_Being', 'units': 64, 'color': '#FFD700'},

{'name': 'Spiritual_Mental_Being', 'units': 128, 'color': '#C0C0C0'},

{'name': 'Higher_Mental_Being', 'units': 256, 'color': '#E5E4E2'},

{'name': 'Illumined_Mind', 'units': 512, 'color': 'blue'},

{'name': 'Intuitive_Mind', 'units': 1024, 'color': 'lightblue'},

{'name': 'Overmind', 'units': 2048, 'color': 'violet'},

{'name': 'Supermind', 'units': 4096, 'color': 'white'}

]

# Define input dimension

input_dim = 100

output_dim = 10 # Example output dimension

# Create the model

model = create_expandable_model(input_dim, output_dim, layer_configs)

# Load the trained DLKNN model (replace with actual model path)

model_path = 'C:\\Users\\yourname\\Downloads\\LargeKnowingModel_NLP.keras'

model = tf.keras.models.load_model(model_path)

# Function to classify inputs using the trained model
def classify_inputs(model, input_data):
    predictions = model.predict(input_data)
    # Pick the index of the highest softmax probability for each row
    predicted_classes = np.argmax(predictions, axis=1)
    return predicted_classes

# Function to draw the neural network
def draw_neural_network(ax, layers, labels, colors, input_data=None):
    v_spacing = 1.5 / float(max(layers))
    h_spacing = 1.0 / float(len(layers) - 1)
    radius = v_spacing / 4

    # Draw the neurons of each layer as labeled circles
    for i, (layer, label_group, color_group) in enumerate(zip(layers, labels, colors)):
        for j in range(layer):
            # facecolor/edgecolor are set separately: combining color= with ec=
            # makes matplotlib override the edge color
            circle = plt.Circle((i * h_spacing, 1 - j * v_spacing), radius,
                                facecolor=color_group[j], edgecolor='k', zorder=4)
            ax.add_artist(circle)
            if i == 0:
                label = f'Input {j+1}'
            elif i == len(layers) - 1:
                label = f'Output {j+1}'
            else:
                label = label_group[j]
            ax.text(i * h_spacing, 1 - j * v_spacing + radius, label, ha='center', fontsize=8)

    # Connect every neuron to every neuron in the next layer
    for i, (layer_a, layer_b) in enumerate(zip(layers[:-1], layers[1:])):
        for j in range(layer_a):
            for k in range(layer_b):
                line = plt.Line2D([i * h_spacing, (i + 1) * h_spacing],
                                  [1 - j * v_spacing, 1 - k * v_spacing], color='k')
                ax.add_artist(line)

    # Draw the path of the input data
    if input_data is not None:
        for data_point in input_data:
            x_pos = 0
            for i, value in enumerate(data_point):
                # Move one column to the right every 10 values
                if i % 10 == 0:
                    x_pos += 1
                y_pos = 1 - (i % 10) * v_spacing
                circle = plt.Circle((x_pos * h_spacing, y_pos), radius / 2,
                                    facecolor='orange', edgecolor='r', zorder=5)
                ax.add_artist(circle)

# Visualization of the neural network

fig = plt.figure(figsize=(20, 12))

ax = fig.gca()

ax.axis('off')

# Define the number of neurons in each layer and their labels

layers = [8, 10, 11, 11, 11, 11, 12, 7]

labels = [

["Awareness", "Recognition", "Understanding", "Intuition", "Insight", "Realization", "Wisdom", "Enlightenment"],

["Factual Knowledge", "Conceptual Knowledge", "Procedural Knowledge", "Metacognitive Knowledge", "Empirical Knowledge", "Theoretical Knowledge", "Practical Knowledge", "Philosophical Knowledge", "Spiritual Knowledge", "Transcendental Knowledge"],

["Physical Consciousness", "Emotional Consciousness", "Mental Consciousness", "Subconscious", "Superconscious", "Cosmic Consciousness", "Transpersonal Consciousness", "Higher Self Consciousness", "Christ Consciousness", "Unity Consciousness", "Non-Dual Consciousness"],

["Ignorance", "Misunderstanding", "Confusion", "Doubt", "Denial", "Skepticism", "Agnosticism", "Mystery", "Paradox", "Uncertainty", "Unawareness"],

["Personal Unknowns", "Interpersonal Unknowns", "Scientific Unknowns", "Historical Unknowns", "Cultural Unknowns", "Technological Unknowns", "Environmental Unknowns", "Cosmic Unknowns", "Spiritual Unknowns", "Metaphysical Unknowns", "Philosophical Unknowns"],

["Repression", "Suppression", "Subconscious", "Collective Unconscious", "Sleep", "Dream State", "Coma", "Trance", "Automatic Behavior", "Amnesia", "Psychological Blind Spots"],

["Infrared", "Magenta", "Red", "Amber", "Orange", "Green", "Teal", "Turquoise", "Indigo", "Violet", "Ultraviolet", "Clear Light"],

["Psychic Being", "Spiritual Mental Being", "Higher Mental Being", "Illumined Mind", "Intuitive Mind", "Overmind", "Supermind"]

]

# Define the colors for each layer

colors = [

['green'] * 8,

['blue'] * 10,

['red'] * 11,

['purple'] * 11,

['orange'] * 11,

['yellow'] * 11,

['darkred', 'magenta', 'red', 'orange', 'darkorange', 'green', 'teal', 'turquoise', 'indigo', 'blueviolet', 'purple', 'white'],

['#FFD700', '#C0C0C0', '#E5E4E2', 'blue', 'lightblue', 'violet', 'white']

]

# Example of new input data (replace with your actual data)

new_input_data = np.array([

[0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0,

0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0,

0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0,

0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0,

0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0,

0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0,

0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0,

0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0,

0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0,

0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0],

[0.5, 0.4, 0.3, 0.2, 0.1, 0.6, 0.7, 0.8, 0.9, 1.0,

0.5, 0.4, 0.3, 0.2, 0.1, 0.6, 0.7, 0.8, 0.9, 1.0,

0.5, 0.4, 0.3, 0.2, 0.1, 0.6, 0.7, 0.8, 0.9, 1.0,

0.5, 0.4, 0.3, 0.2, 0.1, 0.6, 0.7, 0.8, 0.9, 1.0,

0.5, 0.4, 0.3, 0.2, 0.1, 0.6, 0.7, 0.8, 0.9, 1.0,

0.5, 0.4, 0.3, 0.2, 0.1, 0.6, 0.7, 0.8, 0.9, 1.0,

0.5, 0.4, 0.3, 0.2, 0.1, 0.6, 0.7, 0.8, 0.9, 1.0,

0.5, 0.4, 0.3, 0.2, 0.1, 0.6, 0.7, 0.8, 0.9, 1.0,

0.5, 0.4, 0.3, 0.2, 0.1, 0.6, 0.7, 0.8, 0.9, 1.0,

0.5, 0.4, 0.3, 0.2, 0.1, 0.6, 0.7, 0.8, 0.9, 1.0],

[1.0, 0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1,

1.0, 0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1,

1.0, 0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1,

1.0, 0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1,

1.0, 0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1,

1.0, 0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1,

1.0, 0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1,

1.0, 0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1,

1.0, 0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1,

1.0, 0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1]

], dtype=np.float32)

# Classify the new input data

predicted_classes_new_input = classify_inputs(model, new_input_data)

# Print the predicted classes for the new input data

print("Predicted classes for new input data:", predicted_classes_new_input)

# Visualization of the neural network with input data path

draw_neural_network(ax, layers, labels, colors, input_data=new_input_data)

# Add legend

input_patch = plt.Line2D([0], [0], color='green', lw=4, label='Levels of Knowing')

knowledge_patch = plt.Line2D([0], [0], color='blue', lw=4, label='Levels of Knowledge')

consciousness_patch = plt.Line2D([0], [0], color='red', lw=4, label='Levels of Consciousness')

unknowing_patch = plt.Line2D([0], [0], color='purple', lw=4, label='Levels of Unknowing')

unknown_patch = plt.Line2D([0], [0], color='orange', lw=4, label='Levels of Unknowns')

unconscious_patch = plt.Line2D([0], [0], color='yellow', lw=4, label='Levels of Unconsciousness')

altitudes_patch = plt.Line2D([0], [0], color='pink', lw=4, label='Wilber\'s Altitudes')

integral_patch = plt.Line2D([0], [0], color='#FFD700', lw=4, label='Aurobindo\'s Integral Yoga Stages')

input_path_patch = plt.Line2D([0], [0], color='orange', lw=4, label='Input Data Path')

plt.legend(handles=[input_patch, knowledge_patch, consciousness_patch, unknowing_patch, unknown_patch, unconscious_patch, altitudes_patch, integral_patch, input_path_patch], loc='lower left')

plt.title("Deep Layer Neural Network Map with Comprehensive Levels and Input Data Path")

plt.show()

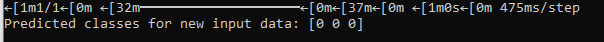

Script Outputs:

The script prints the predicted class index for each row of the new input data and displays the network map shown above.
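To see where the orange input-path markers end up on the map, the layout arithmetic from draw_neural_network can be reproduced without matplotlib. The sketch below uses the same layers list and spacing formulas as the script; the helper name input_marker_positions is an assumption introduced here for illustration.

```python
layers = [8, 10, 11, 11, 11, 11, 12, 7]  # neurons per column, as in the script

v_spacing = 1.5 / float(max(layers))
h_spacing = 1.0 / float(len(layers) - 1)

# x coordinate of each layer column
layer_x = [i * h_spacing for i in range(len(layers))]


def input_marker_positions(num_values):
    """Reproduce the (x, y) positions the script assigns to input-path markers."""
    positions = []
    x_pos = 0
    for i in range(num_values):
        if i % 10 == 0:
            x_pos += 1  # move one column to the right every 10 values
        y_pos = 1 - (i % 10) * v_spacing
        positions.append((x_pos * h_spacing, y_pos))
    return positions


markers = input_marker_positions(100)
max_marker_x = max(x for x, _ in markers)
outside = sum(1 for x, _ in markers if x > layer_x[-1] + 1e-9)

print(f"last layer column at x = {layer_x[-1]:.3f}")
print(f"rightmost marker at x = {max_marker_x:.3f}")
print(f"markers right of the last column: {outside}")
```

For a 100-value row the markers advance through ten columns while the map has only eight, so the last thirty markers of each row are placed to the right of the output column. Shorter input rows, or a step other than 10, would keep the path inside the drawn network.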

DLKNN NLP MODEL

import tensorflow as tf

from tensorflow.keras.models import Sequential, load_model

from tensorflow.keras.layers import Dense, Input

from tensorflow.keras.preprocessing.text import Tokenizer

from tensorflow.keras.preprocessing.sequence import pad_sequences

import numpy as np

import json

# Load and preprocess data
def load_data(file_path):
    texts = []
    labels = []
    # Each line of the file is one JSON object with 'text' and 'label' keys
    with open(file_path, 'r') as file:
        for line in file:
            data = json.loads(line)
            texts.append(data['text'])
            labels.append(data['label'])
    return texts, labels

# Tokenize and pad sequences
def preprocess_texts(texts, max_len):
    tokenizer = Tokenizer()
    tokenizer.fit_on_texts(texts)
    sequences = tokenizer.texts_to_sequences(texts)
    word_index = tokenizer.word_index
    data = pad_sequences(sequences, maxlen=max_len)
    return data, word_index

# Convert labels to categorical
def preprocess_labels(labels, num_classes):
    labels = np.array(labels)
    labels = tf.keras.utils.to_categorical(labels, num_classes=num_classes)
    return labels

# Define DLKNN architecture
def create_dlkkn_model(input_dim, output_dim, layer_configs):
    model = Sequential()
    model.add(Input(shape=(input_dim,)))
    # Add one ReLU Dense layer per configuration entry; the index keeps layer names unique
    for i, config in enumerate(layer_configs):
        layer_name = f"{config['name']}_{i}"
        model.add(Dense(units=config['units'], activation='relu', name=layer_name))
    model.add(Dense(units=output_dim, activation='softmax', name='Output_Layer'))
    model.compile(optimizer='adam', loss='categorical_crossentropy', metrics=['accuracy'])
    return model

# Example layer configurations

layer_configs = [

{'name': 'Awareness', 'units': 32},

{'name': 'Recognition', 'units': 32},

{'name': 'Understanding', 'units': 64},

{'name': 'Intuition', 'units': 64},

{'name': 'Insight', 'units': 128},

{'name': 'Realization', 'units': 128},

{'name': 'Wisdom', 'units': 256},

{'name': 'Enlightenment', 'units': 256},

{'name': 'Factual_Knowledge', 'units': 64},

{'name': 'Conceptual_Knowledge', 'units': 64},

{'name': 'Procedural_Knowledge', 'units': 128},

{'name': 'Metacognitive_Knowledge', 'units': 128},

{'name': 'Empirical_Knowledge', 'units': 256},

{'name': 'Theoretical_Knowledge', 'units': 256},

{'name': 'Practical_Knowledge', 'units': 512},

{'name': 'Philosophical_Knowledge', 'units': 512},

{'name': 'Spiritual_Knowledge', 'units': 1024},

{'name': 'Transcendental_Knowledge', 'units': 1024},

{'name': 'Physical_Consciousness', 'units': 64},

{'name': 'Emotional_Consciousness', 'units': 128},

{'name': 'Mental_Consciousness', 'units': 256},

{'name': 'Subconscious', 'units': 128},

{'name': 'Superconscious', 'units': 64},

{'name': 'Cosmic_Consciousness', 'units': 32},

{'name': 'Transpersonal_Consciousness', 'units': 16},

{'name': 'Higher_Self_Consciousness', 'units': 8},

{'name': 'Christ_Consciousness', 'units': 4},

{'name': 'Unity_Consciousness', 'units': 2},

{'name': 'Non_Dual_Consciousness', 'units': 1},

{'name': 'Infrared_Archaic', 'units': 32, 'color': 'darkred'},

{'name': 'Magenta_Magic', 'units': 32, 'color': 'magenta'},

{'name': 'Red_Power', 'units': 64, 'color': 'red'},

{'name': 'Amber_Mythic', 'units': 64, 'color': 'orange'},

{'name': 'Orange_Scientific', 'units': 128, 'color': 'darkorange'},

{'name': 'Green_Sensitive', 'units': 128, 'color': 'green'},

{'name': 'Teal_Integrative', 'units': 256, 'color': 'teal'},

{'name': 'Turquoise_Holistic', 'units': 256, 'color': 'turquoise'},

{'name': 'Indigo_HigherMind', 'units': 64, 'color': 'indigo'},

{'name': 'Violet_IlluminatedMind', 'units': 128, 'color': 'blueviolet'},

{'name': 'Ultraviolet_Overmind', 'units': 256, 'color': 'purple'},

{'name': 'ClearLight_Supermind', 'units': 512, 'color': 'white'},

{'name': 'Psychic_Being', 'units': 64, 'color': '#FFD700'},

{'name': 'Spiritual_Mental_Being', 'units': 128, 'color': '#C0C0C0'},

{'name': 'Higher_Mental_Being', 'units': 256, 'color': '#E5E4E2'},

{'name': 'Illumined_Mind', 'units': 512, 'color': 'blue'},

{'name': 'Intuitive_Mind', 'units': 1024, 'color': 'lightblue'},

{'name': 'Overmind', 'units': 2048, 'color': 'violet'},

{'name': 'Supermind', 'units': 4096, 'color': 'white'}

]

# Load data

texts, labels = load_data(r'C:\Users\myspi\Downloads\data.jsonl')

max_len = 100 # Adjust based on your dataset

num_classes = len(set(labels))

# Preprocess data

data, word_index = preprocess_texts(texts, max_len)

labels = preprocess_labels(labels, num_classes)

# Create DLKNN model

model = create_dlkkn_model(input_dim=max_len, output_dim=num_classes, layer_configs=layer_configs)

# Train the model

model.fit(data, labels, epochs=10, batch_size=32, validation_split=0.2)

# Save the model

model.save('LargeKnowingModel_NLP.keras')
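The NLP script derives num_classes from len(set(labels)) and passes the labels directly to to_categorical, which works when the labels in data.jsonl are integers running from 0 to num_classes - 1. If the file stores string labels or non-contiguous integers instead, a mapping step is needed first. The following plain-Python sketch shows one way to do that; the helper names and the example labels are hypothetical and not taken from the original data.

```python
def build_label_index(labels):
    """Map each distinct label to a contiguous integer id (sorted for reproducibility)."""
    return {label: idx for idx, label in enumerate(sorted(set(labels)))}


def one_hot(indices, num_classes):
    """Plain-Python equivalent of one-hot encoding a list of class ids."""
    return [[1.0 if j == idx else 0.0 for j in range(num_classes)] for idx in indices]


# Hypothetical labels as they might appear in a JSONL file
raw_labels = ["insight", "awareness", "wisdom", "awareness"]

label_index = build_label_index(raw_labels)
encoded = [label_index[label] for label in raw_labels]
targets = one_hot(encoded, num_classes=len(label_index))

print(label_index)
print(encoded)
print(targets[0])
```

Keeping label_index around (for example by saving it next to the model) also makes it possible to translate the integer predictions back into label names later.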

Latest: DLKNN Neuron Model – train and save the model

import tensorflow as tf

import numpy as np

import matplotlib.pyplot as plt

import networkx as nx

# Custom Layer for Awareness Neurons
class AwarenessLayer(tf.keras.layers.Layer):
    def __init__(self, units):
        super(AwarenessLayer, self).__init__()
        self.units = units

    def build(self, input_shape):
        self.kernel = self.add_weight(shape=(input_shape[-1], self.units),
                                      initializer='glorot_uniform',
                                      trainable=True)

    def call(self, inputs):
        awareness = tf.reduce_mean(inputs, axis=-1, keepdims=True)  # Example of awareness: mean input
        activation = tf.nn.relu(tf.matmul(inputs, self.kernel))
        return activation * awareness

# Custom Layer for Reflective Neurons
class ReflectiveLayer(tf.keras.layers.Layer):
    def __init__(self, units, **kwargs):
        super(ReflectiveLayer, self).__init__(**kwargs)
        self.units = units

    def build(self, input_shape):
        self.kernel = self.add_weight(name='kernel',
                                      shape=(input_shape[-1], self.units),
                                      initializer='uniform',
                                      trainable=True)
        super(ReflectiveLayer, self).build(input_shape)

    def call(self, inputs):
        input_units = inputs.shape[-1]
        kernel_units = self.kernel.shape[0]
        # Zero-pad the inputs if they are narrower than the kernel expects
        if input_units != kernel_units:
            padding = tf.zeros([tf.shape(inputs)[0], kernel_units - input_units])
            inputs = tf.concat([inputs, padding], axis=-1)
        return tf.nn.relu(tf.matmul(inputs, self.kernel))

    def compute_output_shape(self, input_shape):
        return (input_shape[0], self.units)

# Custom Layer for Intentional Neurons
class IntentionalLayer(tf.keras.layers.Layer):
    def __init__(self, units, goal):
        super(IntentionalLayer, self).__init__()
        self.units = units
        self.goal = goal

    def build(self, input_shape):
        self.kernel = self.add_weight(shape=(input_shape[-1], self.units),
                                      initializer='glorot_uniform',
                                      trainable=True)

    def call(self, inputs):
        # Scale the input mean by the fixed goal value
        intention = tf.reduce_mean(inputs, axis=-1, keepdims=True) * self.goal
        activation = tf.nn.relu(tf.matmul(inputs, self.kernel))
        return activation * intention

# Custom Layer for Emotional Neurons
class EmotionalLayer(tf.keras.layers.Layer):
    def __init__(self, units):
        super(EmotionalLayer, self).__init__()
        self.units = units

    def build(self, input_shape):
        self.kernel = self.add_weight(shape=(input_shape[-1], self.units),
                                      initializer='glorot_uniform',
                                      trainable=True)

    def call(self, inputs):
        emotions = tf.reduce_mean(inputs, axis=-1, keepdims=True)
        activation = tf.nn.relu(tf.matmul(inputs, self.kernel))
        return activation * emotions

# Create and compile the model

input_dim = 100 # Example input dimension

output_dim = 10 # Example output dimension

model = tf.keras.Sequential()

model.add(tf.keras.Input(shape=(input_dim,)))

model.add(AwarenessLayer(units=32))

model.add(ReflectiveLayer(units=64))

model.add(IntentionalLayer(units=128, goal=0.5))

model.add(EmotionalLayer(units=256))

model.add(tf.keras.layers.Dense(units=output_dim, activation='softmax', name='Output_Layer'))

model.compile(optimizer='adam', loss='categorical_crossentropy', metrics=['accuracy'])

model.summary()

# Function to classify inputs using the trained model
def classify_inputs(model, input_data):
    predictions = model.predict(input_data)
    # Pick the index of the highest softmax probability for each row
    predicted_classes = np.argmax(predictions, axis=1)
    return predicted_classes

# Visualization function for the neural network
def draw_neural_network(ax, layers, labels, colors, input_data=None):
    v_spacing = 1.5 / float(max(layers))
    h_spacing = 1.0 / float(len(layers) - 1)
    radius = v_spacing / 4

    # Draw the neurons of each layer as labeled circles
    for i, (layer, label_group, color_group) in enumerate(zip(layers, labels, colors)):
        for j in range(layer):
            # facecolor/edgecolor are set separately: combining color= with ec=
            # makes matplotlib override the edge color
            circle = plt.Circle((i * h_spacing, 1 - j * v_spacing), radius,
                                facecolor=color_group[j], edgecolor='k', zorder=4)
            ax.add_artist(circle)
            if i == 0:
                label = f'Input {j+1}'
            elif i == len(layers) - 1:
                label = f'Output {j+1}'
            else:
                label = label_group[j]
            ax.text(i * h_spacing, 1 - j * v_spacing + radius, label, ha='center', fontsize=8)

    # Connect every neuron to every neuron in the next layer
    for i, (layer_a, layer_b) in enumerate(zip(layers[:-1], layers[1:])):
        for j in range(layer_a):
            for k in range(layer_b):
                line = plt.Line2D([i * h_spacing, (i + 1) * h_spacing],
                                  [1 - j * v_spacing, 1 - k * v_spacing], color='k')
                ax.add_artist(line)

    # Draw the path of the input data
    if input_data is not None:
        for data_point in input_data:
            x_pos = 0
            for i, value in enumerate(data_point):
                # Move one column to the right every 10 values
                if i % 10 == 0:
                    x_pos += 1
                y_pos = 1 - (i % 10) * v_spacing
                circle = plt.Circle((x_pos * h_spacing, y_pos), radius / 2,
                                    facecolor='orange', edgecolor='r', zorder=5)
                ax.add_artist(circle)

# Visualization of the neural network

fig = plt.figure(figsize=(20, 12))

ax = fig.gca()

ax.axis('off')

# Define the number of neurons in each layer and their labels

layers = [8, 10, 11, 11, 11, 11, 12, 7]

labels = [

["Awareness", "Recognition", "Understanding", "Intuition", "Insight", "Realization", "Wisdom", "Enlightenment"],

["Factual Knowledge", "Conceptual Knowledge", "Procedural Knowledge", "Metacognitive Knowledge", "Empirical Knowledge", "Theoretical Knowledge", "Practical Knowledge", "Philosophical Knowledge", "Spiritual Knowledge", "Transcendental Knowledge"],

["Physical Consciousness", "Emotional Consciousness", "Mental Consciousness", "Subconscious", "Superconscious", "Cosmic Consciousness", "Transpersonal Consciousness", "Higher Self Consciousness", "Christ Consciousness", "Unity Consciousness", "Non-Dual Consciousness"],

["Ignorance", "Misunderstanding", "Confusion", "Doubt", "Denial", "Skepticism", "Agnosticism", "Mystery", "Paradox", "Uncertainty", "Unawareness"],

["Personal Unknowns", "Interpersonal Unknowns", "Scientific Unknowns", "Historical Unknowns", "Cultural Unknowns", "Technological Unknowns", "Environmental Unknowns", "Cosmic Unknowns", "Spiritual Unknowns", "Metaphysical Unknowns", "Philosophical Unknowns"],

["Repression", "Suppression", "Subconscious", "Collective Unconscious", "Sleep", "Dream State", "Coma", "Trance", "Automatic Behavior", "Amnesia", "Psychological Blind Spots"],

["Infrared", "Magenta", "Red", "Amber", "Orange", "Green", "Teal", "Turquoise", "Indigo", "Violet", "Ultraviolet", "Clear Light"],

["Psychic Being", "Spiritual Mental Being", "Higher Mental Being", "Illumined Mind", "Intuitive Mind", "Overmind", "Supermind"]

]

# Define the colors for each layer

colors = [

['green'] * 8,

['blue'] * 10,

['red'] * 11,

['purple'] * 11,

['orange'] * 11,

['yellow'] * 11,

['darkred', 'magenta', 'red', 'orange', 'darkorange', 'green', 'teal', 'turquoise', 'indigo', 'blueviolet', 'purple', 'white'],

['#FFD700', '#C0C0C0', '#E5E4E2', 'blue', 'lightblue', 'violet', 'white']

]

# Visualization of the neural network with input data path

draw_neural_network(ax, layers, labels, colors)

# Add legend

input_patch = plt.Line2D([0], [0], color='green', lw=4, label='Levels of Knowing')

knowledge_patch = plt.Line2D([0], [0], color='blue', lw=4, label='Levels of Knowledge')

consciousness_patch = plt.Line2D([0], [0], color='red', lw=4, label='Levels of Consciousness')

unknowing_patch = plt.Line2D([0], [0], color='purple', lw=4, label='Levels of Unknowing')

unknown_patch = plt.Line2D([0], [0], color='orange', lw=4, label='Levels of Unknowns')

unconscious_patch = plt.Line2D([0], [0], color='yellow', lw=4, label='Levels of Unconsciousness')

altitudes_patch = plt.Line2D([0], [0], color='pink', lw=4, label='Wilber\'s Altitudes')

integral_patch = plt.Line2D([0], [0], color='#FFD700', lw=4, label='Aurobindo\'s Integral Yoga Stages')

input_path_patch = plt.Line2D([0], [0], color='orange', lw=4, label='Input Data Path')

plt.legend(handles=[input_patch, knowledge_patch, consciousness_patch, unknowing_patch, unknown_patch, unconscious_patch, altitudes_patch, integral_patch, input_path_patch], loc='lower left')

plt.title("Deep Layer Neural Network Map with Comprehensive Levels and Input Data Path")

plt.show()

# Example of new input data (replace with your actual data)

new_input_data = np.array([

[0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0,

0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0,

0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0,

0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0,

0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0,

0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0,

0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0,

0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0,

0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0,

0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9, 1.0],

[0.5, 0.4, 0.3, 0.2, 0.1, 0.6, 0.7, 0.8, 0.9, 1.0,

0.5, 0.4, 0.3, 0.2, 0.1, 0.6, 0.7, 0.8, 0.9, 1.0,

0.5, 0.4, 0.3, 0.2, 0.1, 0.6, 0.7, 0.8, 0.9, 1.0,

0.5, 0.4, 0.3, 0.2, 0.1, 0.6, 0.7, 0.8, 0.9, 1.0,

0.5, 0.4, 0.3, 0.2, 0.1, 0.6, 0.7, 0.8, 0.9, 1.0,

0.5, 0.4, 0.3, 0.2, 0.1, 0.6, 0.7, 0.8, 0.9, 1.0,

0.5, 0.4, 0.3, 0.2, 0.1, 0.6, 0.7, 0.8, 0.9, 1.0,

0.5, 0.4, 0.3, 0.2, 0.1, 0.6, 0.7, 0.8, 0.9, 1.0,

0.5, 0.4, 0.3, 0.2, 0.1, 0.6, 0.7, 0.8, 0.9, 1.0,

0.5, 0.4, 0.3, 0.2, 0.1, 0.6, 0.7, 0.8, 0.9, 1.0],

[1.0, 0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1,

1.0, 0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1,

1.0, 0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1,

1.0, 0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1,

1.0, 0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1,

1.0, 0.9, 0.8, 0.7, 0.6, 1.0, 0.5, 0.4, 0.3, 0.2, 0.1,

1.0, 0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1,

1.0, 0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1,

1.0, 0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1,

1.0, 0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1,

1.0, 0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1,

1.0, 0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1]

], dtype=np.float32)

# Define training data (example)

train_input_data = np.random.rand(1000, input_dim).astype(np.float32)

train_output_data = np.random.randint(0, output_dim, 1000)

train_output_data = tf.keras.utils.to_categorical(train_output_data, num_classes=output_dim)

# Train the model

model.fit(train_input_data, train_output_data, epochs=10, batch_size=32)

# Save the model

model.save('LargeKnowingModel-noalgonlp.keras')

# Classify the new input data with the trained model

predicted_classes_new_input = classify_inputs(model, new_input_data)

# Print the predicted classes for the new input data

print("Predicted classes for new input data:", predicted_classes_new_input)

# Visualize the network with new input data

fig = plt.figure(figsize=(20, 12))

ax = fig.gca()

ax.axis('off')

draw_neural_network(ax, layers, labels, colors, input_data=new_input_data)

plt.title("Deep Layer Neural Network Map with Comprehensive Levels and Input Data Path")

plt.show()
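As a quick sanity check on the custom-layer model, the number of trainable weights can be worked out by hand and compared with what model.summary() reports. The sketch below assumes the layer sizes used in the script (100 inputs, custom layers of 32, 64, 128, and 256 units, 10 outputs) and relies on the fact that the custom layers define only a kernel and no bias; the helper name count_parameters is introduced here for illustration.

```python
def count_parameters(input_dim, hidden_units, output_dim):
    """Count trainable weights: bias-free custom layers plus a Dense output layer."""
    total = 0
    previous = input_dim
    for units in hidden_units:
        total += previous * units  # kernel only, the custom layers define no bias
        previous = units
    total += previous * output_dim + output_dim  # Dense kernel plus bias
    return total


# Sizes taken from the script above
total_params = count_parameters(100, [32, 64, 128, 256], 10)
print(total_params)
```

If the total shown by model.summary() differs from this figure, a layer size or the input dimension has probably changed from the example values.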

Peace!

Sources: GPT 4o
